Before starting a VxRail infrastructure upgrade, run the pre-validation tests from vCenter and from the VxRail Manager command line.

Please take some time to review the Customer Preparation Guide KB:

https://www.dell.com/support/kbdoc/en-us/000200768

Recommendation:

VxRail Engineering performed a data analysis, which showed that 92% of upgrades complete with no issues when the ESXi nodes are proactively rebooted. The reboot identifies VMs with potential vMotion issues, ESXi maintenance mode issues and reboot issues, and it refreshes all ESXi services.

Therefore, RPS recommends that customers perform a rolling reboot of the ESXi nodes several days before the VxRail upgrade (Customer Task).

If a customer has any issues during the reboots, they can open an SR with the VxRail Support team to address them.

Additionally, your Upgrade Engineer will reboot all Service VMs (VxRail Manager, vCenter** and PSC**) and reset iDRAC on all nodes prior to starting the upgrade.

**Only if VxRail Managed.
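The rolling reboot recommended above can be sketched as a loop that handles one host at a time and stops at the first failure, so the problem can be raised with Support before the upgrade. This is only an illustration: the step names and the callbacks are hypothetical placeholders for whatever tooling you use, not a VxRail or vSphere API.

```python
from typing import Callable, Dict, List, Sequence

# A step takes a host name and returns True on success.
# All steps here are hypothetical callbacks supplied by the caller.
Step = Callable[[str], bool]


def rolling_reboot(hosts: Sequence[str], steps: Dict[str, Step]) -> List[str]:
    """Run every step on one host before moving on to the next host.

    Raises RuntimeError at the first failing step so that no further
    host is touched while the cluster is in a degraded state.
    Returns the hosts that completed all steps.
    """
    completed: List[str] = []
    for host in hosts:
        for name, step in steps.items():
            if not step(host):
                raise RuntimeError(
                    f"step '{name}' failed on {host}; completed hosts: {completed}"
                )
        completed.append(host)
    return completed


# Example with stand-in steps that always succeed.
demo_steps: Dict[str, Step] = {
    "enter maintenance mode": lambda host: True,
    "reboot": lambda host: True,
    "exit maintenance mode": lambda host: True,
}
print(rolling_reboot(["esxi-01", "esxi-02"], demo_steps))
```

Stopping at the first failure keeps at most one node out of service at any time, which is the point of doing the reboot in a rolling fashion.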

1. Run Skyline Health

Log in to the VxRail vCenter -> Cluster -> Monitor

Under vSAN, run Skyline Health.

2. Check Resyncing Objects

Log in to the VxRail vCenter -> Cluster -> Monitor

Under vSAN -> Resyncing Objects

If all objects have already resynced, no action is needed. If not, run the resync from Configuration.

3. Change how long the VxRail cluster waits before it starts repairing objects (the Object repair timer). By default it is set to 60 minutes.

Change it to 300 minutes or more to avoid an object resync during node isolation.

Log in to the VxRail vCenter -> Cluster -> Configuration

Under vSAN -> Services -> Advanced Options

Click Edit and set the Object repair timer.
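To confirm the change took effect, the timer value can also be checked with a small script. The sketch below rests on two assumptions that are not part of the steps above: that the timer is exposed on each ESXi host as the advanced setting `/VSAN/ClomRepairDelay`, and that `esxcfg-advcfg -g /VSAN/ClomRepairDelay` prints a line of the form `Value of ClomRepairDelay is 60`. Verify both on your own hosts before relying on it.

```python
import re

DEFAULT_REPAIR_DELAY_MIN = 60        # default value stated in this guide
RECOMMENDED_REPAIR_DELAY_MIN = 300   # minimum value recommended in this guide


def parse_repair_delay(output: str) -> int:
    """Extract the timer value in minutes from the command output.

    Assumes output shaped like 'Value of ClomRepairDelay is 60'.
    """
    match = re.search(r"ClomRepairDelay is (\d+)", output)
    if match is None:
        raise ValueError(f"unexpected output: {output!r}")
    return int(match.group(1))


def needs_increase(current_min: int,
                   target_min: int = RECOMMENDED_REPAIR_DELAY_MIN) -> bool:
    """Return True when the timer is still below the recommended value."""
    return current_min < target_min


sample = "Value of ClomRepairDelay is 60"   # assumed output format
current = parse_repair_delay(sample)
print(current, needs_increase(current))
```

Remember to set the timer back to its original value once the upgrade has finished.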

4. Enable VxRail health monitoring.

Log in to the VxRail vCenter using root credentials and run the command below to check the health status.

Command -> vsan.whatif_host_failures 0

Download the vxverify_XXX_XX_XXX.phy file.

Run the vxverify_XXX_XXX_XX file using Python.

Once you run this command, VxRail Manager will start collecting the health report, as shown below.

In preparation for your upcoming upgrade event, note the known items below:

Schedule the upgrade for a time outside of your peak I/O load, as performance degradation may occur during the migration of VMs while individual nodes or hosts are being upgraded.

Since VMs are vMotioned as part of the upgrade, ensure in advance that all VMs can be vMotioned. Examples of issues which may prevent vMotion:

VM with an ISO mounted.

VM with external storage locally mounted.

VM pinned to a host (affinity rules).
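The three blockers listed above lend themselves to a simple pre-check over a VM inventory. The sketch below is a minimal illustration: `VmInfo` and its fields are hypothetical, and you would need to populate them from your own inventory export or tooling.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class VmInfo:
    """Hypothetical inventory record; fields mirror the blockers listed above."""
    name: str
    iso_mounted: bool = False
    local_external_storage: bool = False
    pinned_to_host: bool = False


def vmotion_blockers(vms: List[VmInfo]) -> Dict[str, List[str]]:
    """Map each VM that may fail vMotion to the reasons found for it."""
    report: Dict[str, List[str]] = {}
    for vm in vms:
        reasons: List[str] = []
        if vm.iso_mounted:
            reasons.append("ISO mounted")
        if vm.local_external_storage:
            reasons.append("external storage locally mounted")
        if vm.pinned_to_host:
            reasons.append("pinned to a host (affinity rule)")
        if reasons:
            report[vm.name] = reasons
    return report


inventory = [
    VmInfo("app-01"),
    VmInfo("db-01", iso_mounted=True, pinned_to_host=True),
]
print(vmotion_blockers(inventory))
```

VMs that do not appear in the report showed none of the three listed issues, which does not rule out other causes of a failed vMotion.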

Download the latest VxRail upgrade package from the Dell portal.

https://dl.dell.com/downloads/PG9N6_VxRail-8.0.110-Composite-Upgrade-Package-for-7.0.x.zip

Upload the package to the VxRail cluster:

Log in to vCenter -> Select Cluster -> Configuration

Under VxRail -> Updates -> Local Updates

Select the update bundle and upload it.

Once the upload completes, click Start.

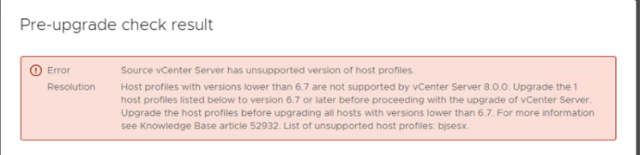

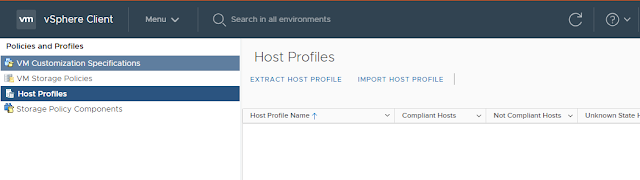

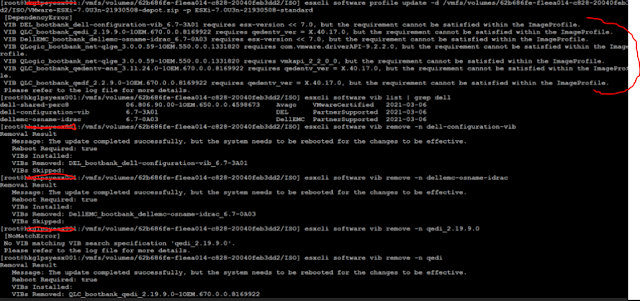

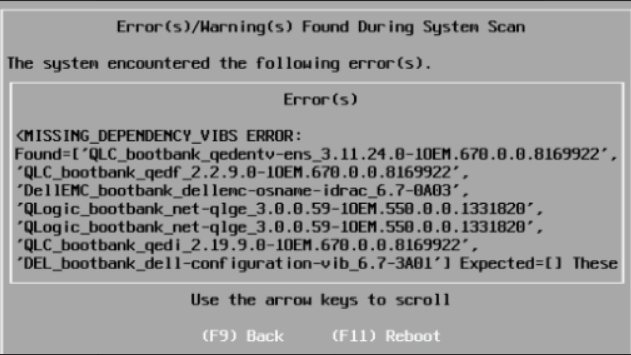

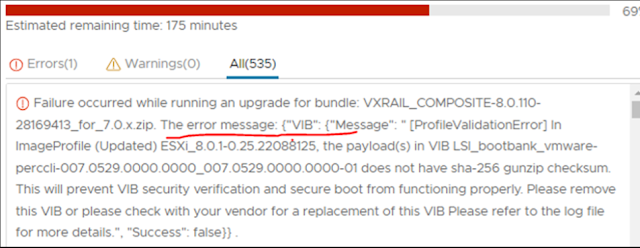

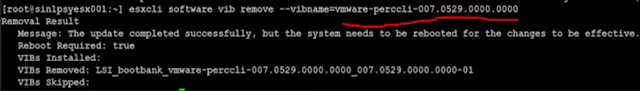

Once you click Start, the process runs Precheck -> Scan and then Update.

All the tasks then complete automatically: vCenter is upgraded first, then the ESXi hosts.

You will be prompted to enter a temporary IP address, which is assigned to vCenter for the duration of the upgrade.
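It can help to sanity-check the temporary IP before entering it. The sketch below assumes, as a working rule that the steps above do not state, that the temporary address should sit in the same subnet as vCenter and must not already be in use. The function name, the network and the address list are all illustrative.

```python
import ipaddress
from typing import Iterable, List


def check_temp_ip(temp_ip: str, vcenter_network: str,
                  in_use: Iterable[str]) -> List[str]:
    """Return a list of problems found with the proposed temporary IP.

    An empty list means no problem was detected by these checks.
    Raises ValueError if temp_ip or vcenter_network is malformed.
    """
    problems: List[str] = []
    addr = ipaddress.ip_address(temp_ip)
    network = ipaddress.ip_network(vcenter_network, strict=False)

    if addr not in network:
        problems.append(f"{addr} is outside {network}")
    elif addr in (network.network_address, network.broadcast_address):
        problems.append(f"{addr} is the network or broadcast address")

    if addr in {ipaddress.ip_address(ip) for ip in in_use}:
        problems.append(f"{addr} is already in use")
    return problems


# Example with addresses from the documentation range 192.0.2.0/24.
print(check_temp_ip("192.0.2.50", "192.0.2.0/24", ["192.0.2.10", "192.0.2.11"]))
```

The in-use list is only as good as its source, so a quick ping or an IPAM lookup for the chosen address is still worthwhile.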